# call each cluster to run the code to import the library

Rdd = sc.parallelize() # assuming 4 worker nodes If in a cluster environment such as in AWS EMR, you can try: import os For example, if you typically use Python 3 but use Python 2 for pyspark, then you would not have shapely available for pyspark. You may need to check your environment variables for your default python environment(s). If on your laptop/desktop, pip install shapely should work just fine. laptop/desktop) or in a cluster environment (e.g. > df2 = df.withColumn('bounds1', udf_parse_region('bounds'))

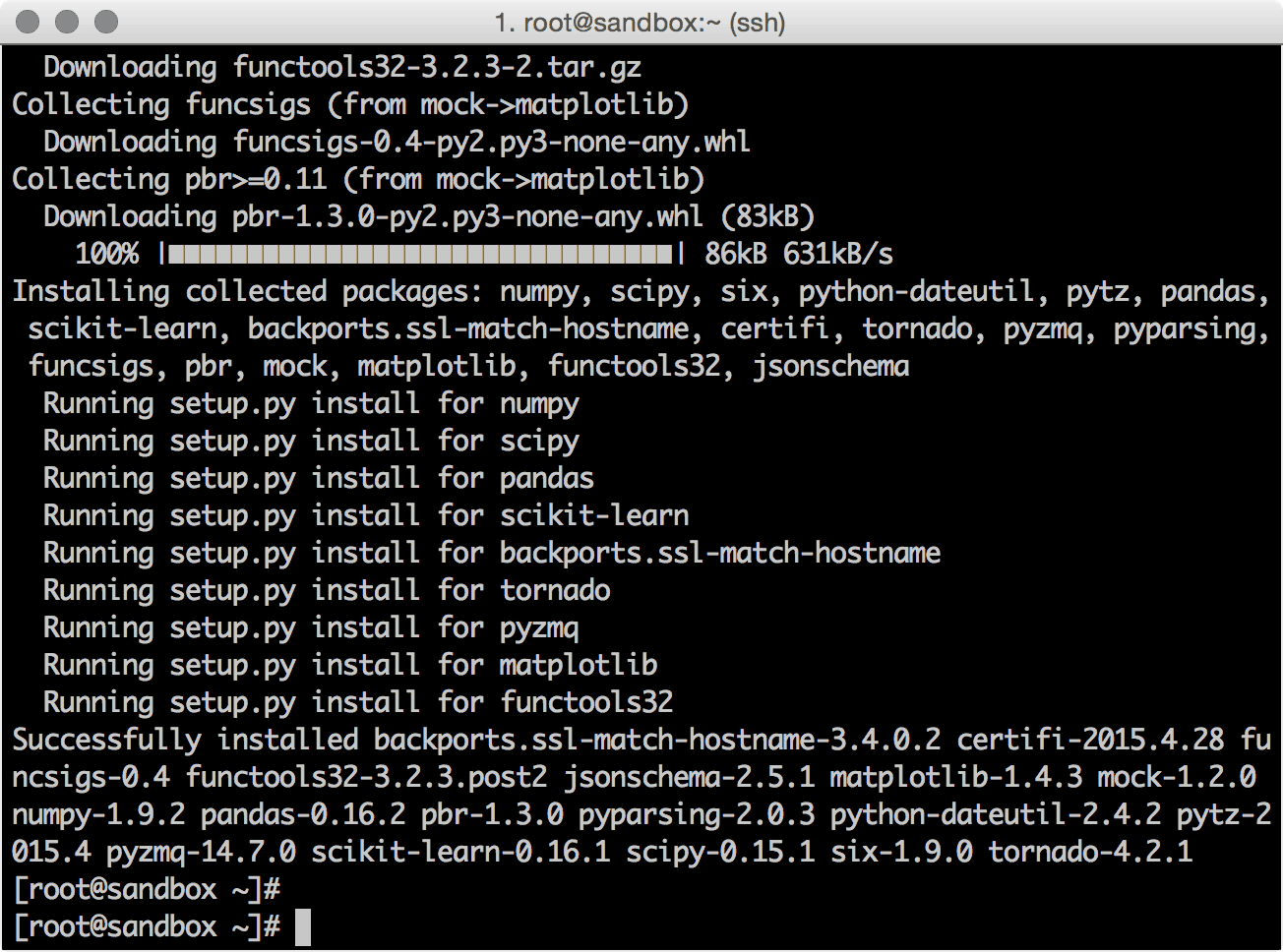

> df = sqlContext.sql('select id, bounds from limit 10') is not enabled so recording the schema version 1.2.0ġ6/09/06 22:18:34 WARN ObjectStore: Failed to get database default, returning NoSuchObjectException > udf_parse_region=udf(parse_region, StringType())ġ6/09/06 22:18:34 WARN ObjectStore: Version information not found in metastore. return str(load_wkt('POLYGON ((%s))' % ','.join(coordinate_list)).wkt) coordinate_list = map(reverse_coordinate, region.split(', ')) reverse_coordinate = lambda coord: ' '.join(reversed(coord.split(':'))) from shapely.wkt import loads as load_wkt > from shapely.wkt import loads as load_wkt SparkContext available as sc, HiveContext available as sqlContext. Type "help", "copyright", "credits" or "license" for more information. I have tested both scenarios.įollowing is a sample pyspark and shapely code (Spark SQL UDF) to ensure above commands are working as expected: Python 2.7.10 (default, Dec 8 2015, 18:25:23) Note: Instead of running as a bootstrap-actions this script can be executed independently in every node in a cluster. Sudo /bin/sh -c 'echo -e "\nexport LD_LIBRARY_PATH=/usr/lib" > /home/hadoop/.bashrc' Sudo /bin/sh -c 'echo "/usr/lib/local" > /etc/ld.so.conf' Sudo /bin/sh -c 'echo "/usr/lib" > /etc/ld.so.conf'

Sudo cp /home/hadoop/geos-bin/lib/* /usr/lib configure -prefix=$HOME/geos-bin
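The advice about checking which Python environment pyspark uses can be made concrete with a small diagnostic. The following is a minimal sketch, not part of the original answer: the helper name `describe_python_environment` is hypothetical, and it assumes a Python 3 interpreter. It reports which interpreter is running, the values of the `PYSPARK_PYTHON` and `PYSPARK_DRIVER_PYTHON` environment variables that Spark consults when choosing an interpreter, and whether a given module can be found from that interpreter.

```python
import importlib.util
import os
import sys


def describe_python_environment(module_name="shapely"):
    """Report the running interpreter and whether module_name is importable from it.

    Hypothetical helper for illustration; pass a top-level module name only.
    """
    return {
        # Path of the interpreter executing this code
        "executable": sys.executable,
        "version": "%d.%d.%d" % sys.version_info[:3],
        # Spark reads these variables to pick the worker and driver interpreters;
        # None means the variable is not set in this process
        "PYSPARK_PYTHON": os.environ.get("PYSPARK_PYTHON"),
        "PYSPARK_DRIVER_PYTHON": os.environ.get("PYSPARK_DRIVER_PYTHON"),
        # find_spec returns None when the module is not installed for this interpreter
        "module_found": importlib.util.find_spec(module_name) is not None,
    }


if __name__ == "__main__":
    for key, value in describe_python_environment().items():
        print("%s: %s" % (key, value))
```

Running this inside the pyspark shell describes the driver. If `module_found` is false there while `pip` reports Shapely as installed, the package most likely went into a different Python environment than the one pyspark is using.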
